So, we did that and things seem to be running smoothly. The next step is to figure out why Go 1.20 is being slow.

Initially, I suspected it could be something to do with cgo. In systems without a C compiler, for some modules of the stdlib the implementation now uses pure Go instead of relying on C. But if there is a C compiler available, the old modules should be used.

However, even when using images that had a C compiler available and setting CGO_ENABLED=1, things are slow.

I then put some timings inside the test runner to see exactly where the time was being lost:

1.20.2-bullseye with the env vars for cgo set:

$ time docker container run -a stdout -a stderr --stop-timeout 0 --rm -v /Users/andre/lab/hello-world:/mnt/exercism-iteration -l exercism-meta exercism/test-runner:1.20.2-bullseye-cgo hello-world /mnt/exercism-iteration/ /mnt/exercism-iteration/

2023/03/17 20:39:44 Checking args 11.713µs

2023/03/17 20:39:44 Parsing exercise config took 9.080198ms

2023/03/17 20:39:58 testCmd.Run() took 13.557003663s

2023/03/17 20:39:58 Running tests took 13.557117492s

2023/03/17 20:39:58 Getting structure took 7.914157ms

2023/03/17 20:39:58 Marshalling took 75.356µs

2023/03/17 20:39:58 Executing tests 13.574476829s

2023/03/17 20:39:58 Writing results 11.859595ms

2023/03/17 20:39:58 Test runner took 13.58681567s

docker container run -a stdout -a stderr --stop-timeout 0 --rm -v -l 0.08s user 0.06s system 0% cpu 15.542 total

1.19.7-bullseye with the exact same conditions:

time docker container run -a stdout -a stderr --stop-timeout 0 --rm -v /Users/andre/lab/hello-world:/mnt/exercism-iteration -l exercism-meta exercism/test-runner:1.19.7-bullseye-cgo hello-world /mnt/exercism-iteration/ /mnt/exercism-iteration/

2023/03/17 20:44:01 Checking args 13.02µs

2023/03/17 20:44:01 Parsing exercise config took 6.497086ms

2023/03/17 20:44:03 testCmd.Run() took 1.991614259s

2023/03/17 20:44:03 Running tests took 1.991730087s

2023/03/17 20:44:03 Getting structure took 13.368996ms

2023/03/17 20:44:03 Marshalling took 150.848µs

2023/03/17 20:44:03 Executing tests 2.011823182s

2023/03/17 20:44:03 Writing results 21.412597ms

2023/03/17 20:44:03 Test runner took 2.033506323s

docker container run -a stdout -a stderr --stop-timeout 0 --rm -v -l 0.08s user 0.05s system 3% cpu 3.401 total

The interesting thing to note here is that the difference in time between 1.19 and 1.20 is in the call testCmd.Run(). This is when we “shell out” to run go test. Everything else looks normal and no differences are noticeable between versions.

However, running the test runner locally outside docker with Go 1.20 (mac) doesn’t show the same problem:

$ ./go-test-runner /Users/andre/lab/hello-world /Users/andre/lab/hello-world

2023/03/17 23:04:28 Checking args 18.503µs

2023/03/17 23:04:28 Parsing exercise config took 914.321µs

2023/03/17 23:04:28 testCmd.Run() took 697.718911ms

2023/03/17 23:04:28 Running tests took 697.857369ms

2023/03/17 23:04:28 Getting structure took 1.090841ms

2023/03/17 23:04:28 Marshalling took 65.432µs

2023/03/17 23:04:28 Executing tests 699.98051ms

2023/03/17 23:04:28 Writing results 1.461088ms

2023/03/17 23:04:28 Test runner took 701.635727ms

In fact, attaching directly to the container and running the test runner there also shows similar results to running locally:

$ docker run -it -v /Users/andre/lab/hello-world:/mnt/exercism-iteration --user appuser --entrypoint /bin/bash exercism/test-runner:1.20.2-bullseye-cgo

appuser@17b5fc365cf7:/opt/test-runner$ go version

go version go1.20.2 linux/amd64

appuser@17b5fc365cf7:/opt/test-runner$ bin/test-runner /mnt/exercism-iteration/ /mnt/exercism-iteration/

2023/03/17 22:22:50 Checking args 33.339µs

2023/03/17 22:22:50 Parsing exercise config took 11.801936ms

2023/03/17 22:22:51 testCmd.Run() took 1.827009028s

2023/03/17 22:22:51 Running tests took 1.827114131s

2023/03/17 22:22:51 Getting structure took 4.361865ms

2023/03/17 22:22:51 Marshalling took 64.518µs

2023/03/17 22:22:51 Executing tests 1.843516918s

2023/03/17 22:22:51 Writing results 5.259687ms

2023/03/17 22:22:51 Test runner took 1.849310084s

It seems the problem it’s not with Go 1.20, but some other docker configuration that might be making things slow.

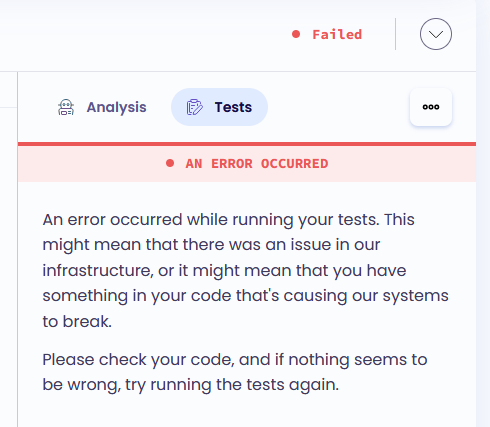

![]()

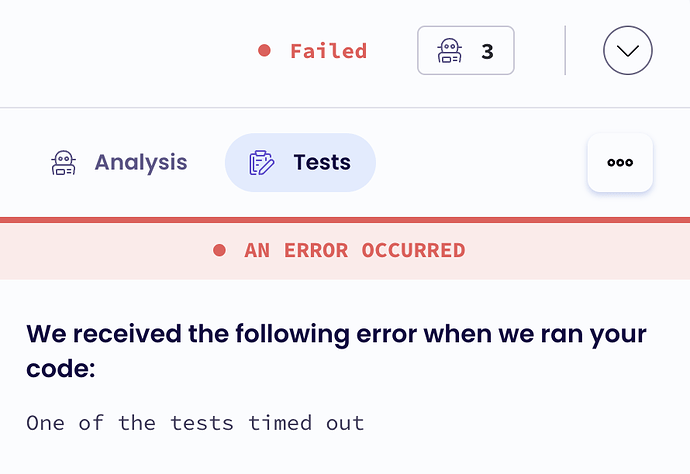

![]()

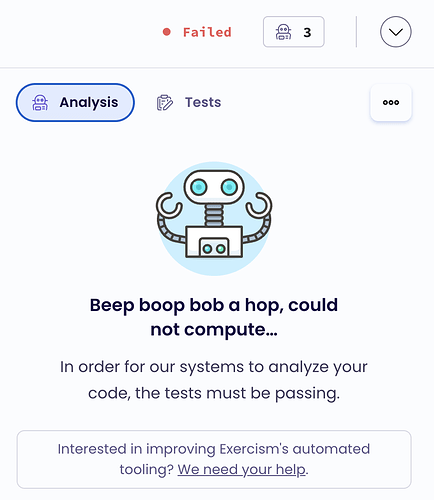

![]()